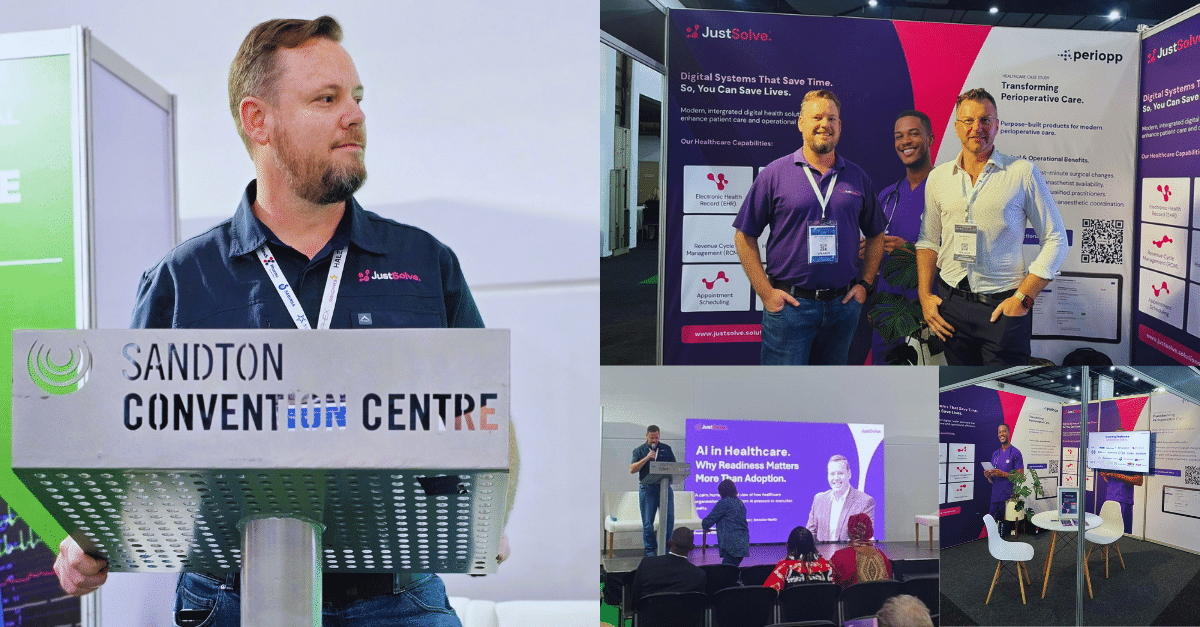

Healthcare leaders are under enormous pressure to “do something with AI”. Boards want progress. Clinicians want relief from administrative burden. Innovators want momentum. Vendors promise acceleration. But between the promise and the reality sits a harder question: what must be true for AI to help rather than harm?

That is where the conversation needs to change. The issue is no longer whether AI will matter in healthcare. It already does. The issue is whether organisations are ready to introduce it safely, govern it responsibly, and embed it in a way that reduces friction instead of multiplying it. In healthcare, readiness is not a technical side note. It is a patient safety issue.

The Adoption Trap.

Too many healthcare organisations are being pushed into an adoption mindset. Adoption sounds positive. It implies progress. It looks decisive. But adoption is often measured by visible activity: a pilot launched, a tool procured, a vendor appointed, a dashboard presented to the board. None of these things, on their own, mean an organisation is ready to operationalise AI safely at scale.

Readiness is different. Readiness asks whether workflows are stable enough to augment. Whether clinicians trust the system. Whether data is usable in the context in which decisions are actually made. Whether governance, escalation paths, and auditability exist before autonomy is introduced. Whether leaders understand not just what the technology can do, but what the organisation can currently absorb.

That distinction matters because AI does not enter a neutral environment. It lands inside a living system of people, clinical routines, workarounds, compliance obligations, handovers, and human judgment. If that system is fragmented, AI does not magically tidy it up. It exposes the fragmentation more quickly.

Why AI-on-Top Fails.

In many organisations, AI is being applied “on top” of existing reality. A model is added to a workflow that was already noisy. A decision-support layer is introduced to a process that already suffers from alert fatigue. A chatbot is launched on top of knowledge that is incomplete, outdated, or disconnected from clinical practice. The result is predictable: more clicks, more uncertainty, more exceptions, and more hidden risk.

AI-on-top fails because it confuses augmentation with accumulation. It adds another layer of interaction without removing the friction underneath. In healthcare, this is especially dangerous. Administrative burden is not abstract. It is carried by people working in high-pressure environments where every interruption competes with patient attention. Every extra click is a tax on clinical attention. Every ambiguous recommendation introduces cognitive load. Every poorly governed workflow erodes trust.

The right response is not to reject AI. It is to reject superficial implementation. Healthcare organisations should be using this moment to redesign how work happens, not merely to digitise what already exists. Intelligent transformation means asking where AI should assist, where it should remain silent, and where human judgment must remain central.

Digital Maturity Is Not the Same as AI Readiness.

One of the most common mistakes in healthcare is to assume that data availability or digital tooling automatically equals AI readiness. It does not. A hospital may have an EHR, cloud infrastructure, and multiple digital systems, yet still be poorly prepared for responsible AI use. Data may exist but be fragmented across departments. Clinical processes may vary meaningfully from what the documentation suggests. Governance may be designed for static systems rather than adaptive ones.

A useful way to frame this is to separate digital maturity from AI maturity. Digital maturity asks whether core processes, systems, and information flows have been modernised. AI maturity asks whether those foundations are coherent enough to support augmentation, recommendation, automation, and ongoing learning without destabilising care delivery.

That is why a readiness-led approach matters. It allows leaders to assess not just where AI could create value, but where premature adoption would create fragility. Sometimes the most responsible step is not “launch a pilot”. It is “fix the workflow first”.

What Readiness Actually Means.

In practical terms, healthcare AI readiness can be assessed across five dimensions:

- Process: Are workflows stable, understood, and measurable enough to automate or augment?

- People: Do clinicians, operational leaders, and governance owners trust the system and understand accountability?

- Data: Is information usable in real clinical contexts, not just available in reporting environments?

- Technology: Can systems integrate without introducing fragility, duplication, or unsafe workarounds?

- Governance: Are override paths, auditability, escalation mechanisms, and change controls built in from the start?

This framework is valuable because it grounds AI conversations in reality. Readiness is not about perfection. It is about knowing the current state honestly enough to decide where AI should assist and where it should not. That honesty is often what separates responsible progress from expensive theatre.

Burnout, Friction, and the Human Cost.

Healthcare burnout is often discussed as if technology can simply “solve” it. In truth, badly designed technology can make it worse. Burnout does not come only from workload. It also comes from friction: extra screens, extra handoffs, extra alerts, duplicate entry, poor timing, ambiguous responsibility, and constant interruptions to flow. Bad AI increases those pressures. Good AI removes them quietly.

This is why human-centred design is not optional in healthcare transformation. AI should reduce administrative drag, support confidence, and create a calmer experience for clinicians. If it cannot do that, then it is not ready for that environment. The measure of success is not the sophistication of the model. It is whether the frontline experience improves without compromising trust or safety.

Human Judgment Must Remain Central.

Healthcare decisions do not happen in a vacuum. They happen in context. A recommendation is not a decision. A probability is not accountability. A well-performing model still needs to exist inside a system where human judgment, escalation, and override are preserved. Full automation can be tempting, particularly where staffing pressures are real, but temptation is not a governance strategy.

The most trusted AI systems do not remove humans. They elevate them. They improve information flow. They surface relevant context. They reduce the time spent on low-value administrative tasks. They make the human decision-maker more effective without pretending that judgment can be outsourced entirely. Trust in AI is not earned through accuracy alone. It is earned through transparency and control.

What Leadership Should Do Next.

Healthcare leaders broadly face three paths.

- The first is to freeze: to do nothing until the market feels safer.

- The second is FOMO: to move quickly because everyone else appears to be moving.

- The third is deliberate, readiness-led execution. This third path is harder because it asks for patience, discipline, and uncomfortable honesty. But it is the only path that creates sustainable value.

A readiness-led path typically begins with a clear understanding of current processes, information flows, friction points, and governance gaps. It identifies where AI could remove burden, support decision-making, or improve service delivery. It does not force AI into every use case. It sequences transformation logically: capture intent, establish foundations, orchestrate workflows, then scale and govern ongoing operations. That is how intelligent transformation becomes real rather than performative.

Moving Beyond the AI Hype.

Healthcare does not need more AI enthusiasm. It needs more execution maturity. That means the conversation must evolve from “How quickly can we adopt?” to “How responsibly can we operationalise?” It means understanding that adopting AI too early can be just as risky as adopting it too late. It means treating readiness as a patient safety issue, not a procurement milestone.

The future of healthcare will absolutely include AI. But the organisations that benefit most will not necessarily be the first to adopt. They will be the ones that introduce intelligence into care environments with humility, discipline, and respect for the humans who must trust and use these systems every day. The goal is not to be first. The goal is to be trusted.

That is the real shift now in front of healthcare leaders: from adoption to readiness, from experimentation to execution, and from hype to responsible, human-centred intelligent transformation.